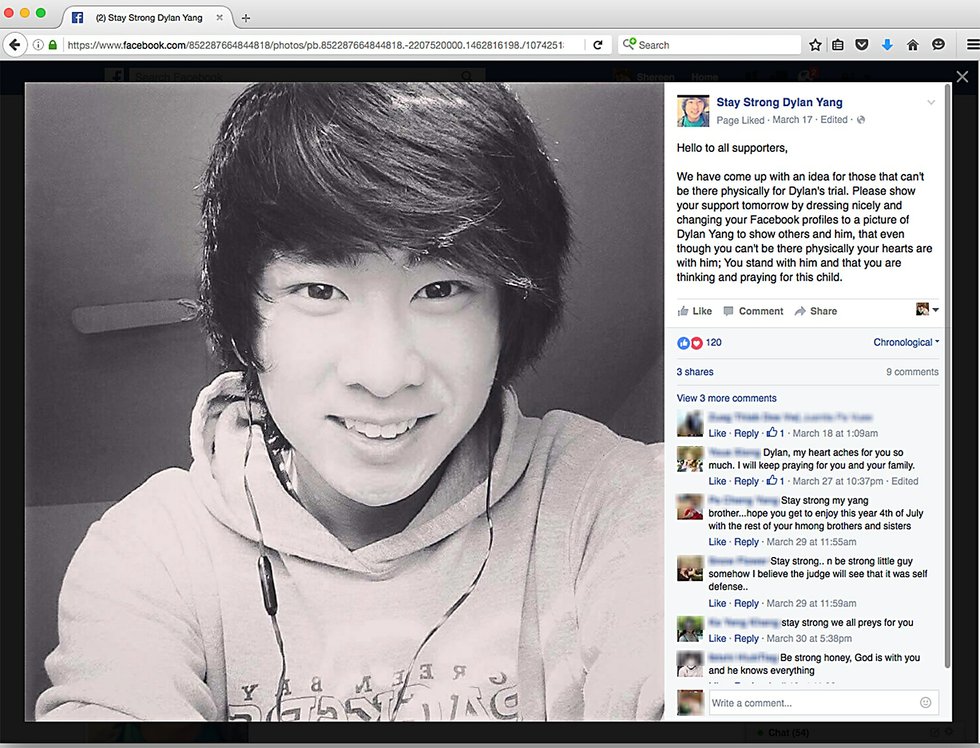

When 16-year-old Dylan Yang is sentenced in October for the fatal stabbing of a 13-year-old boy, Judge LaMont Jacobson would normally rely heavily on a report that assesses Yang’s risk to the community. That report would help Jacobson decide whether to send Yang to prison or offer an alternative sentence, such as probation.

Dylan Yang: A Facebook page in support of Dylan Yang has thousands of members. (Facebook screen grab)

But that methodology could change, as serious questions are being raised over whether the software-based assessment known as Correctional Offender Management Profiling for Alternative Sanctions, or COMPAS, is biased against men and non-whites. Yang’s case has already been clouded by whispers of racial bias, as many of his supporters believe he is being treated unfairly because he is Hmong.

Since 2011, COMPAS has been used routinely by judges in all Wisconsin counties, says Dept. of Corrections spokesman Tristan Cook, and was a factor in more than 56,000 Wisconsin cases in 2015. Once a defendant is convicted of a felony anywhere in Wisconsin, the DOC attaches a completed assessment to the confidential presentencing report given to the sentencing judge.

Later this month, the state’s highest court is expected to rule on a case that questions the scientific validity of the COMPAS assessment and challenges its use of racial and gender-specific questions. Federal civil rights laws prohibit judges from relying on race or gender when determining an appropriate sentence for convicted criminals.


Marathon County stopped using COMPAS earlier this year after the state Supreme Court agreed to hear the case, says Deputy District Attorney Theresa Wetzsteon. The high court’s decision will determine whether Marathon County reinstates the tool or stops using it altogether.

Theresa Wetzsteon (Contributed photo)

“Obviously, we are very much aware that there have been concerns raised, and I am very interested to see what the Supreme Court will decide,” says Wetzsteon, who is the sole candidate in the election to replace outgoing District Attorney Ken Heimerman this fall.

Risk assessment tools can be useful, Wetzsteon says, but only when the people who use them are properly trained and the tools themselves are validated.

“You can’t just take a person and plug in a bunch of factors to come up with a prediction for the future,” Wetzsteon says. “You have to allow for other factors to come into play.”

Before COMPAS software was introduced, judges and corrections officers manually assessed the risk a defendant posed to the community and the likelihood that he or she would commit another crime. Those assessments drew on the same family, legal, school and community background information as COMPAS but, according to COMPAS critics, allowed for more subjective, personal and discretionary input than a computer-generated set of questions.

The high court ruling, which could come at any time, would be among the first to speak to the legality of such automated risk assessments. If the court rules against using COMPAS, it won’t be used in sentencing Yang, who was convicted in March of fatally stabbing Isaiah Powell in a fight between rival groups of friends.


COMPAS also assesses nearly two dozen so-called “criminogenic needs” that relate to the major theories of criminality. For example, it factors in criminal personality, social isolation, substance abuse and stability in residence. It ranks defendants low, medium or high risk in each category, then spits out a “risk score” seen only by judges and attorneys. Today, it is among the most widely used assessment tools in the country.

Critics of the program point to a 2007 University of California study that concluded there was little to no evidence that such assessments accurately predict recidivism. In May, a scathing ProPublica analysis found the tool remarkably unreliable in forecasting violent crime: just 20% of those predicted to commit violent crimes actually went on to do so.

The ProPublica analysis also found significant racial disparities. In forecasting who would be most likely to commit a future crime, the COMPAS algorithm made mistakes with black and white defendants at roughly the same rate, but in very different ways, according to ProPublica. The formula wrongly labeled black defendants as future criminals at almost twice the rate of white defendants, while white defendants were more often mislabeled as low risk. The disparity could not be explained by defendants’ prior crimes or the types of crimes they were arrested for.

Northpointe, the for-profit company that created COMPAS, strongly disputes ProPublica’s findings and says its own studies show COMPAS has an accuracy rate of about 70%.

The debate stems from a 2013 La Crosse County case in which a judge relied on COMPAS to sentence Eric Loomis to six years in prison for taking a vehicle without the owner’s consent and fleeing an officer. At Loomis’ sentencing, the judge said the COMPAS assessment pegged Loomis as highly likely to commit another crime, justifying the unusually severe prison term. Loomis appealed, arguing that the judge unfairly gave him a longer sentence solely because of the COMPAS result. His attorneys also argue that COMPAS violates a defendant’s right to due process.

Part of the problem is the shroud of secrecy surrounding the assessments. Northpointe does not publicly disclose the questions or the calculations, and copyright laws shield the program from open records laws. That means defendants and lawyers can’t test the validity of COMPAS, and the Dept. of Corrections cannot be compelled to release the COMPAS database to the public. An open records request to review the database was denied.

The appeals court declined to rule on the Loomis case and instead asked the state Supreme Court to weigh in; the high court heard arguments in April. In a September 2015 filing, the appeals court noted that “race and gender are improper factors” and should not be relied upon in sentencing. The court also said that if defendants are unable to test the validity of such assessments, due process questions arise.